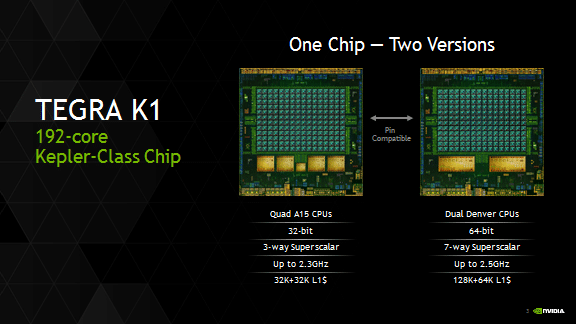

The Hot Chips 2014 conference conveyed some hot information this week about Nvidia’s 64-bit Tegra K1, the first 64-bit ARM processor for Android devices, which pairs the dual-core “Project Denver” CPU with Nvidia’s 192-core Kepler GPU (a ceepee geepee). The ARM-based Denver CPU was custom designed by Nvidia and is compatible with ARM’s 64-bit ARMv8-A architecture. The chip is also pin-compatible with the 32-bit Tegra K1, so products can be built to use either chip without any tweaking. Think ARM64 Jetson boards, Shield Tablets, and Chromebooks. Nvidia claims exceptional performance and superior energy efficiency over other ARM-based mobile processors.

Nvidia launched Project Denver, originally intended for servers, roughly three to five years ago. The recent Cirrascale RM1905D server is one instantiation of that effort, combining ARM64 with GPUs as shown in the graphic below.

In June the Wall Street Journal reported that Nvidia (along with Samsung) has decided to shy away from the server chip battle and is now focusing its latest 64-bit Tegra chips (Tegra K1) on smartphones, tablets, cars and other embedded devices. According to seekingalpha.com, Nvidia believes the mobile K1 chip could make it into microservers, but has scrapped any near-term plan to develop a specialized server CPU. Nvidia derives over 10% of its valuation from the Tegra division, with Tegra processor revenue expected to reach $1.5 billion on the strength of three key growth drivers: mobile devices, automotive electronics and gaming systems. Rather than building its own server processors, Nvidia will supplement ARM processor-based servers with high-performance GPUs. The Cirrascale offering appears to confirm this strategy and is in line with the 2013 comment by Sumit Gupta, General Manager of the Tesla Accelerated Computing business unit at NVIDIA, that “We think GPU accelerators are going to be, in effect, the floating-point units for ARM processors.”

Qualcomm has been working on server chips but has not announced any plans to introduce them. Other companies that have announced plans for ARM-based chips for servers include Applied Micro Circuits, AMD, Broadcom, Cavium, Texas Instruments and Marvell Technology Group.

Nvidia expects its partners to launch mobile devices based on its 64-bit Tegra K1 later this year. The company is currently developing on the 64-bit Tegra K1 for the next version of Android, Android L, which caters directly to enterprise concerns. The combination of low power, high performance, and 64-bit capability means Nvidia will be able to expand further into a multitude of large markets where visual computing matters, such as auto navigation systems, TV set-top boxes and new desktop form factors like all-in-ones, clip-ons, smart monitors, and others.

The Nvidia blog contains more details of the Nvidia ARM64 processor.


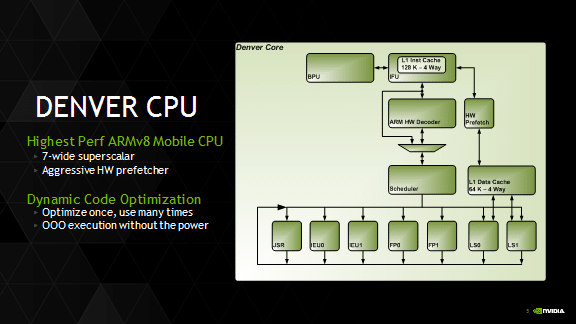

Each of the two Denver cores implements a 7-way superscalar microarchitecture (up to seven micro-ops can be executed concurrently per clock) and includes a 128KB 4-way L1 instruction cache and a 64KB 4-way L1 data cache. A 2MB 16-way L2 cache is shared by both cores.
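To make these cache figures a little more concrete: the number of sets in a set-associative cache is capacity / (ways × line size). The article does not give Denver's cache line size, so the sketch below simply assumes 64-byte lines. Under that assumption the L1 instruction cache would have 512 sets, the L1 data cache 256 and the shared L2 2048. The helper names (`num_sets`, `split_address`) are illustrative only.

```python
# Cache sizes and associativity are from the article.
# LINE_BYTES is an ASSUMPTION: the article does not state Denver's line size.
LINE_BYTES = 64

CACHES = {
    "L1I (per core)": (128 * 1024, 4),
    "L1D (per core)": (64 * 1024, 4),
    "L2 (shared)": (2 * 1024 * 1024, 16),
}


def num_sets(size_bytes: int, ways: int, line_bytes: int = LINE_BYTES) -> int:
    """Sets = capacity / (associativity * line size)."""
    assert size_bytes % (ways * line_bytes) == 0
    return size_bytes // (ways * line_bytes)


def split_address(addr: int, sets: int, line_bytes: int = LINE_BYTES):
    """Split an address into (tag, set index, byte offset).

    Assumes sets and line_bytes are powers of two, as in typical caches.
    """
    offset_bits = line_bytes.bit_length() - 1  # log2(line_bytes)
    index_bits = sets.bit_length() - 1         # log2(sets)
    offset = addr & (line_bytes - 1)
    index = (addr >> offset_bits) & (sets - 1)
    tag = addr >> (offset_bits + index_bits)
    return tag, index, offset


if __name__ == "__main__":
    for name, (size, ways) in CACHES.items():
        print(f"{name}: {num_sets(size, ways)} sets of {ways} ways")
```

With a different real line size the set counts scale accordingly; only the formula, not the specific numbers, carries over.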


Denver implements an innovative process called Dynamic Code Optimization, which optimizes frequently used software routines at runtime into dense, highly tuned microcode-equivalent routines. These optimized routines are stored in a dedicated 128MB optimization cache carved out of main memory, from which they are fetched into the instruction cache and executed repeatedly for as long as they are needed and capacity allows. Nvidia claims, “Dynamic Code Optimization works with all standard ARM-based applications, requiring no customization from developers, and without added power consumption versus other ARM mobile processors.”
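The general idea of profiling code at runtime, optimizing the hot routines, and keeping the results in a capacity-bounded cache can be illustrated with a toy model. This is not Nvidia's implementation: the real mechanism works on ARM machine code rather than Python functions, and the hot-execution threshold, the LRU eviction policy, and names such as `DynamicOptimizer` and `translate` are hypothetical choices made for the sketch.

```python
from collections import OrderedDict
from typing import Callable, Dict, Optional

Routine = Callable[[int], int]

# Hypothetical: number of unoptimized runs before a routine counts as "hot".
HOT_THRESHOLD = 3


class OptimizationCache:
    """Capacity-bounded store of optimized routines, evicting least recently used."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._entries: "OrderedDict[str, Routine]" = OrderedDict()

    def get(self, name: str) -> Optional[Routine]:
        fn = self._entries.get(name)
        if fn is not None:
            self._entries.move_to_end(name)  # mark as most recently used
        return fn

    def put(self, name: str, fn: Routine) -> None:
        self._entries[name] = fn
        self._entries.move_to_end(name)
        if len(self._entries) > self.capacity:
            self._entries.popitem(last=False)  # evict least recently used

    def __contains__(self, name: str) -> bool:
        return name in self._entries


class DynamicOptimizer:
    """Runs routines, profiles them, and swaps in optimized versions once hot."""

    def __init__(self, routines: Dict[str, Routine],
                 translate: Callable[[str, Routine], Routine],
                 capacity: int) -> None:
        self.routines = routines
        self.translate = translate  # hypothetical stand-in for a real optimizer
        self.cache = OptimizationCache(capacity)
        self.counts: Dict[str, int] = {}
        self.optimized_runs = 0

    def run(self, name: str, arg: int) -> int:
        fast = self.cache.get(name)
        if fast is not None:
            self.optimized_runs += 1
            return fast(arg)
        # Cold path: run the original routine and count how often it runs.
        self.counts[name] = self.counts.get(name, 0) + 1
        if self.counts[name] >= HOT_THRESHOLD:
            self.cache.put(name, self.translate(name, self.routines[name]))
        return self.routines[name](arg)


if __name__ == "__main__":
    routines = {"sum_to": lambda n: sum(range(n))}
    # Toy "optimized" equivalent: the closed form of sum(range(n)).
    faster = {"sum_to": lambda n: n * (n - 1) // 2}
    opt = DynamicOptimizer(routines, lambda name, fn: faster.get(name, fn), capacity=1)
    print([opt.run("sum_to", 10) for _ in range(5)], opt.optimized_runs)
    # prints: [45, 45, 45, 45, 45] 2
```

The first three calls run the original routine; the third crosses the threshold and installs the optimized version, so the last two calls hit the cache. As with the real optimization cache, results are identical either way; only the execution path changes.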

In our opinion, Dynamic Code Optimization sounds suspiciously like the generation of microcode “kernels” that could be executed in parallel either on the ARM cores or, depending on the magic of LLVM, on the Kepler GPU. Time will tell if that suspicion is borne out!
