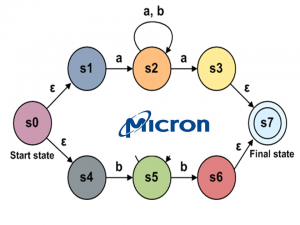

Adding computation to memory is a fantastic way to accelerate applications and real-time solutions. Content addressable memory (CAM) is a widespread and compelling example of how hardware can speed table lookups. (Most virtual memory computers utilize CAM to perform page lookups.) Micron recently announced the Automata Processor (AP), which implements an NFA (Non-deterministic Finite Automaton) in silicon to provide high-speed, comprehensive search and analysis of complex, unstructured data streams. Micron stresses that the AP is not a memory device, but rather a memory-based device that leverages the intrinsic parallelism of DRAM to answer questions about data as it is streamed across the chip. Micron believes the AP addresses a class of problems for which neither CPUs nor GPGPUs are well suited.

Analysis

Reading the paper linked in the announcement, the Micron AP is definitely an interesting product. The automata processor appears to be a next step in Micron’s efforts to provide high-speed bread-and-butter Internet infrastructure. For example, these devices could provide a very fast way for routers to pick paths through the Internet, and even to identify IP addresses. Micron is clearly working to stay a technology leader with the AP and their membership in the Hybrid Memory Cube Consortium. Micron has been shipping samples of stacked DRAM chips (Hybrid Memory Cubes) for routers for a couple of years. It is likely that some form of stacked memory will revolutionize CPU and GPU memory systems. See my Scientific Computing article “Big Money for Big Data” for more information. The NVIDIA Volta announcement confirms the potential of stacked memory as well. As a computer scientist, I’d love to see how the Micron AP performs on graph algorithms. Two topics of special interest are:

- We need to see how the AP communications fabric enables or limits performance. It is really challenging to implement a communications fabric that does not rely on locality. Unfortunately, locality is not something one can rely on in unstructured data.

- How “randomly” does the silicon choose pathways?

In their paper, the Micron engineers validated the communications fabric with some unspecified workloads. They wrote:

> The routing matrix is a complex lattice of programmable switches, buffers, routing lines, and cross-point connections. While in an ideal theoretical model of automata every element can potentially be connected to every other element, the actual physical implementation in silicon imposes routing capacity limits related to tradeoffs in clock rate, propagation delay, die size, and power consumption. From our initial design, deeply informed by our experience in memory architectures, we progressively refined our model of connectivity until we were satisfied that the target automata could be compiled to the fabric and that our performance objectives would be met.

We will see if the Micron engineers chose a set of workloads that conforms to what users expect in their applications.

Bottom line: NFAs (Non-deterministic Finite Automata) are powerful tools for string matching and regular expression evaluation. We will see if the Micron implementation realizes the power of the computer science theory. Beyond that, we will see if developers and systems administrators are up to the task of working with and supporting non-deterministic hardware.
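For readers who have not worked with NFAs, here is a minimal software sketch of how one matches strings in a stream. It is a textbook active-state-set simulation in Python, not Micron's API or hardware design; the toy patterns ("abc" and "abd"), the `TRANSITIONS` table, and the `scan` function are all hypothetical names chosen for illustration. The point it shows is that every active state consumes the same input symbol in the same step, which is the kind of parallelism a hardware implementation would exploit.

```python
from typing import Dict, List, Set, Tuple

START = 0

# Hypothetical toy NFA that recognizes "abc" and "abd" anywhere in a stream.
# Reading 'a' in the start state activates two states at once (1 and 3);
# that fan-out is the non-determinism.
TRANSITIONS: Dict[Tuple[int, str], Set[int]] = {
    (0, "a"): {1, 3},
    (1, "b"): {2},
    (2, "c"): {5},
    (3, "b"): {4},
    (4, "d"): {6},
}

# Accepting states, labeled with the pattern they report.
ACCEPTING: Dict[int, str] = {5: "abc", 6: "abd"}


def scan(stream: str) -> List[Tuple[int, str]]:
    """Return (position, pattern) for every match ending at that position."""
    active: Set[int] = set()
    matches: List[Tuple[int, str]] = []
    for pos, symbol in enumerate(stream):
        # The start state is re-armed on every symbol so that a match
        # can begin at any offset in the stream.
        candidates = active | {START}
        next_active: Set[int] = set()
        # Every candidate state sees the same symbol in the same step.
        for state in candidates:
            next_active |= TRANSITIONS.get((state, symbol), set())
        # Report accepting states in a fixed order for reproducible output.
        for state in sorted(next_active):
            if state in ACCEPTING:
                matches.append((pos, ACCEPTING[state]))
        active = next_active
    return matches


if __name__ == "__main__":
    print(scan("xabcabd"))
```

In software, the inner loop over `candidates` costs time proportional to the number of active states for each input symbol. Hardware that evaluates all states simultaneously would, in principle, remove that loop, which is why the routing and connectivity questions raised above matter so much.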

For more information: TechRadar also has an article on the Micron AP processor.
