On January 24, SanDisk announced shipments of ULLtraDIMM SSD storage in concert with an IBM announcement rebranding the SanDisk ULLtraDIMMs as eXFlash DIMMs. On March 21, SanDisk’s stock hit a 14-year high.

ULLtraDIMM SSD storage puts Flash memory in a standard DIMM form factor that plugs into a memory socket. The system UEFI/BIOS recognizes the MCS (Memory Channel Storage) modules as specialized devices, and an MCS driver for Linux, Windows, or VMware takes control of them. The MCS driver then manages use of those modules as primary storage or as a memory extension.

With ULLtraDIMM storage, the customer gains 200 GB – 400 GB per DIMM of non-volatile secondary storage that can fit on a blade or inside a server. Direct access across the memory bus (although not currently byte-addressable) provides high-bandwidth (760 MB/s – 1 GB/s read/write), low-latency (150 µsec read, less than 5 µsec write) storage. While perfect for video capture, databases, and virtualized servers (as evidenced by the initial Diablo Technologies OS support), this performance comes at the cost of one or perhaps a pair of DIMM slots, depending on system architecture.
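To put those per-DIMM figures in perspective, here is a rough back-of-the-envelope sketch. The capacity and bandwidth numbers are the ones quoted above; the function names, the decimal units (1 GB = 1000 MB), and the idea that capacity simply adds up across DIMMs are my own simplifying assumptions, not vendor specifications.

```python
# Figures quoted in the text; everything else here is an illustrative assumption.
CAPACITY_GB = (200, 400)       # per-DIMM capacity range
BANDWIDTH_MB_S = (760, 1000)   # per-DIMM bandwidth range (1 GB/s taken as 1000 MB/s)


def fill_time_seconds(capacity_gb, bandwidth_mb_s):
    """Seconds needed to stream capacity_gb at a sustained bandwidth_mb_s."""
    return capacity_gb * 1000 / bandwidth_mb_s


def blade_capacity_tb(dimm_count, capacity_gb):
    """Total flash capacity in TB, assuming capacity adds linearly per DIMM."""
    return dimm_count * capacity_gb / 1000


if __name__ == "__main__":
    for cap in CAPACITY_GB:
        for bw in BANDWIDTH_MB_S:
            t = fill_time_seconds(cap, bw)
            print(f"{cap} GB at {bw} MB/s: about {t:.0f} s to fill")
    # Hypothetical blade that gives up eight DIMM slots to flash.
    print(f"8 x 400 GB DIMMs: {blade_capacity_tb(8, 400):.1f} TB")
```

Under these assumptions, even the largest module can be written end to end in a matter of minutes, which is why the per-slot trade-off is mostly about RAM capacity rather than flash throughput.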

How does ULLtraDIMM compare against the adage, “Real Memory for Real Performance”?

For storage-dominated workloads, DIMM-based flash storage has several advantages that make the SanDisk/IBM products an attractive option even with the loss of RAM capacity:

- A terabyte or three of local NAND storage per blade eliminates the need to access data across the network interface, which removes network jitter, can improve overall system performance, and allows customers to better realize the full scalability of their blade architecture.

- Eliminating much of the OS and all of the PCIe and network jitter means that small yet very high-capacity data capture devices (think video or A/D converters) can be designed to meet very tight QoS (Quality of Service) agreements.

In comparison, a high-performance RAID system built out of SSD devices can achieve around 12 Gb/s of throughput, but with higher latency. Thus, for streaming or other latency-tolerant designs where there is space for a RAID controller and SSDs, the loss in potential RAM capacity might swing the design decision towards a more traditional storage architecture.
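The RAID figure and the per-DIMM figures are quoted in different units, so a quick conversion helps when weighing the two. The sketch below assumes that "12 Gb/s" means gigabits per second, that units are decimal, and that DIMM bandwidth aggregates ideally across modules; all three are my assumptions, and real systems will fall short of ideal scaling.

```python
import math


def gbit_to_gbyte(gbit_per_s):
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbit_per_s / 8


def dimms_to_match(target_gbyte_s, per_dimm_gbyte_s):
    """Smallest DIMM count whose ideal aggregate bandwidth meets the target."""
    return math.ceil(target_gbyte_s / per_dimm_gbyte_s)


if __name__ == "__main__":
    raid = gbit_to_gbyte(12)  # RAID throughput quoted in the text
    print(f"RAID: {raid} GB/s")
    for per_dimm in (0.76, 1.0):  # per-DIMM range quoted earlier in the text
        n = dimms_to_match(raid, per_dimm)
        print(f"{per_dimm} GB/s per DIMM: {n} DIMMs to match")
```

On raw bandwidth alone the two approaches land in the same neighborhood under these assumptions, which is why latency and lost RAM capacity end up driving the design decision.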

For the right design verticals, ULLtraDIMM storage provides compelling arguments. For example, the VMware support makes ULLtraDIMM storage modules perfect for hosting virtual machine services (like WordPress) that share a single OS image and utilize a local MySQL database. This is undoubtedly one reason why Big Blue is so bullish on the SanDisk products … because they will sell blade servers. It also reinforces IBM’s commitment of $1B to further develop Flash technologies.

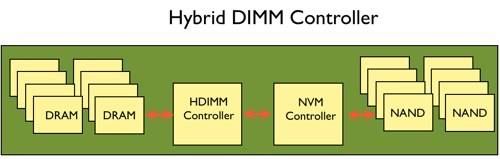

Micron offers a competitive Hybrid DIMM controller that contains both RAM and Flash on the same device so system integrators don’t have to sacrifice all the capacity of one or a pair of DIMM slots. In the Micron product, data moves dynamically between the two memory types according to demand, so that the most frequently used data resides in the fastest memory. Think of a memory-mapped (mmap) region that has an uber-fast path to storage that is not subject to software or CPU overhead.
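For readers who have not used memory mapping, here is a minimal sketch of the analogy using Python's standard `mmap` module on an ordinary temporary file. It only illustrates the programming model (plain memory stores that end up in storage); it says nothing about how the Micron hardware is actually implemented, and the function name `mmap_roundtrip` is made up for this example.

```python
import mmap
import os
import tempfile


def mmap_roundtrip(payload: bytes) -> bytes:
    """Store payload through a memory mapping, then read it back from the file."""
    fd, path = tempfile.mkstemp()
    try:
        os.ftruncate(fd, len(payload))        # a mapping needs a non-empty file
        with mmap.mmap(fd, len(payload)) as region:
            region[:] = payload               # looks like a memory write, no write() call
            region.flush()                    # push the dirty pages to the backing file
        os.lseek(fd, 0, os.SEEK_SET)
        return os.read(fd, len(payload))      # ordinary file read sees the same bytes
    finally:
        os.close(fd)
        os.remove(path)


if __name__ == "__main__":
    print(mmap_roundtrip(b"hot data"))
```

In software, the operating system still does page-fault and flush work behind that mapping; the appeal of a hybrid DIMM is that the movement between fast and slow memory would happen without that overhead.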
